Difference-in-Differences (DiD): A Quasi-Experimental Design to Estimate Treatment Effects Over Time

In the world of causal inference, understanding why something happens is often more valuable than knowing what happened. Data science, in this sense, is like archaeology — instead of digging through soil, data scientists excavate layers of information, brushing away noise and uncertainty to uncover cause and effect. Among their finest tools lies an elegant, time-tested technique known as Difference-in-Differences (DiD) — a method that reveals how interventions shape outcomes when randomized experiments aren’t possible.

The Essence of DiD: Listening to Time’s Echo

Imagine two cities: Riverdale and Hilltown. Riverdale introduces a pollution tax, while Hilltown doesn’t. Over the years, air quality improves in both — but perhaps more in Riverdale. How much of that improvement came from the tax and how much from broader trends, like the shift to electric vehicles?

The Difference-in-Differences method answers this by comparing changes over time rather than just levels. It studies the before-and-after difference in the treated group and subtracts the before-and-after difference in the control group. What’s left — the “difference of differences” — is the estimated treatment effect.
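
The subtraction described above can be sketched in a few lines of Python. This is only an illustration: the pollution-index values for Riverdale and Hilltown are invented, and the helper name `did_estimate` is hypothetical.

```python
# Hypothetical average pollution index (lower is better) for the two cities.
# All numbers are invented for illustration only.
means = {
    ("Riverdale", "before"): 80.0,
    ("Riverdale", "after"): 62.0,
    ("Hilltown", "before"): 78.0,
    ("Hilltown", "after"): 70.0,
}


def did_estimate(means, treated, control):
    """Return (treated change, control change, difference-in-differences)."""
    treated_change = means[(treated, "after")] - means[(treated, "before")]
    control_change = means[(control, "after")] - means[(control, "before")]
    return treated_change, control_change, treated_change - control_change


treated_change, control_change, did = did_estimate(means, "Riverdale", "Hilltown")

print(f"Riverdale change: {treated_change:+.1f}")  # -18.0
print(f"Hilltown change:  {control_change:+.1f}")  # -8.0
print(f"DiD estimate:     {did:+.1f}")             # -10.0
```

In this made-up example, pollution fell by 18 points in Riverdale and 8 points in Hilltown, so 10 points of the improvement would be attributed to the tax, provided the two cities would otherwise have moved in parallel.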

This approach transforms time into a storyteller, comparing parallel timelines to see where one diverges due to an intervention. For anyone mastering causal analysis through a data science course in Pune, DiD offers not just mathematical precision but narrative clarity — it helps data scientists tell “what would have happened” if nothing had changed.

Case Study 1: The Minimum Wage and Employment Debate

In the early 1990s, economists David Card and Alan Krueger conducted a now-legendary natural experiment. In April 1992, New Jersey raised its minimum wage from $4.25 to $5.05 an hour, while neighboring Pennsylvania didn’t. Since a randomized trial was not an option for a statewide policy, they compared employment at fast-food restaurants in New Jersey and eastern Pennsylvania before and after the change.

By applying DiD, they found no evidence that the wage increase reduced employment, contrary to what critics predicted. The finding reshaped the debate in labor economics, and in 2021 Card shared the Nobel Memorial Prize in Economic Sciences for his empirical contributions to the field.

The brilliance of the study lies in its simplicity — using time and comparison to isolate causality. In a similar way, a data scientist course often teaches students to seek patterns not just in numbers, but in the timelines those numbers inhabit. DiD becomes the bridge between messy real-world data and meaningful policy conclusions.

Case Study 2: Digital Education and the Learning Gap

Consider an edtech firm that launched AI-driven personalized learning software in select schools across Karnataka in 2018. Some schools adopted it; others didn’t. Over the next three years, all schools showed improvements in student performance — thanks to better internet access and teacher training. But the question remained: how much of the improvement was truly due to the AI software?

Here, DiD became the compass. Analysts compared performance growth in treated and untreated schools, before and after the software rollout. The DiD estimate showed that schools using the AI tool improved their math scores by 12% more than schools without it. If the two groups would otherwise have followed parallel trends, that gap can be read as the software’s causal effect.
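
With data on individual schools, the same comparison can be made on each school’s own change, which also gives a rough sense of uncertainty. The sketch below uses invented school-level scores (it does not reproduce the 12% figure) and a hypothetical helper, `did_with_se`. The standard error is a simple two-sample formula that assumes schools are independent.

```python
from math import sqrt
from statistics import mean, variance

# Hypothetical school-level average math scores as (before, after) pairs.
treated_schools = [(52.0, 66.0), (48.0, 61.0), (55.0, 70.0), (50.0, 62.0)]
control_schools = [(51.0, 58.0), (49.0, 55.0), (54.0, 60.0), (50.0, 57.0)]


def changes(schools):
    """Before-to-after change for each school."""
    return [after - before for before, after in schools]


def did_with_se(treated, control):
    """DiD as the gap in mean changes, with an unpooled standard error."""
    d_t, d_c = changes(treated), changes(control)
    estimate = mean(d_t) - mean(d_c)
    # Sample variances of the changes, assuming independent schools.
    se = sqrt(variance(d_t) / len(d_t) + variance(d_c) / len(d_c))
    return estimate, se


estimate, se = did_with_se(treated_schools, control_schools)
print(f"DiD estimate: {estimate:.2f} points (SE {se:.2f})")
```

With only a handful of schools per group, the standard error is a rough guide at best. Real analyses typically use a regression with an interaction term and clustered standard errors.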

This example mirrors how learners in a data science course in Pune can practice applying DiD to real-world educational or business settings. It shows that DiD isn’t just academic — it’s practical, scalable, and deeply human-centered. It measures impact where randomization isn’t ethical or feasible.

Case Study 3: Telemedicine and Hospital Efficiency

During the pandemic, a large hospital network introduced telemedicine in some regions but not others, based on infrastructure readiness. Both groups faced the same external pressures — lockdowns, resource shortages, and patient surges. Yet, after a year, some hospitals appeared far more efficient in managing patient load.

To separate the effect of telemedicine from pandemic-wide changes, analysts turned to Difference-in-Differences. They measured performance in both sets of hospitals before and after the telemedicine rollout and found that consultation rates rose 20% more in hospitals with telemedicine than in those without it.

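This story illustrates how DiD can work in dynamic environments where control groups can’t be perfectly isolated. One caveat: because telemedicine was rolled out based on infrastructure readiness rather than at random, the comparison is credible only if both sets of hospitals were on similar trajectories beforehand. For learners mastering techniques in a data scientist course, this underscores how statistical rigor and social insight combine to reveal actionable truth in chaos.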

Challenges and the Art of Assumptions

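While DiD is powerful, it rests on a crucial assumption: the parallel trends condition. It assumes that, in the absence of the treatment, the treated and control groups would have followed parallel paths over time, so the gap between them would have stayed constant. If this assumption fails, the DiD estimate is biased, because part of the measured “effect” is really a difference in underlying trends.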

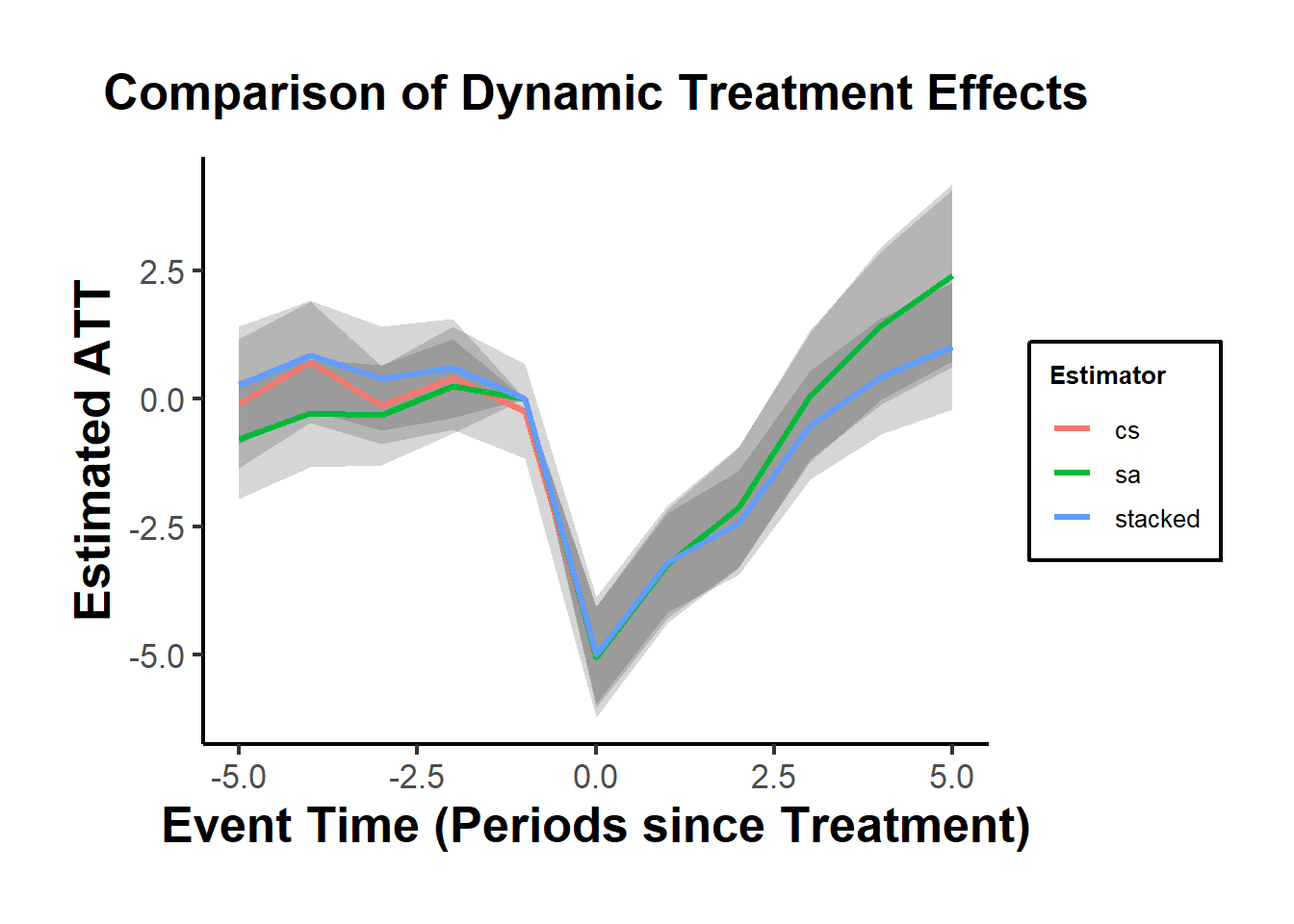
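
The estimator and the parallel trends condition can be stated compactly. The notation here is a common textbook convention rather than anything taken from this article: $\bar{Y}_{g,t}$ is the mean outcome of group $g$ (treated $T$ or control $C$) in period $t$, $D = 1$ marks treated units, and $Y_t(0)$ is the outcome a unit would have had in period $t$ without treatment.

```latex
% DiD estimator: change in the treated group minus change in the control group
\hat{\delta}_{\mathrm{DiD}}
  = \left(\bar{Y}_{T,\mathrm{post}} - \bar{Y}_{T,\mathrm{pre}}\right)
  - \left(\bar{Y}_{C,\mathrm{post}} - \bar{Y}_{C,\mathrm{pre}}\right)

% Parallel trends: without treatment, both groups would have changed by the same amount
E\left[\,Y_{\mathrm{post}}(0) - Y_{\mathrm{pre}}(0) \mid D = 1\,\right]
  = E\left[\,Y_{\mathrm{post}}(0) - Y_{\mathrm{pre}}(0) \mid D = 0\,\right]
```

The second line cannot be tested directly, because the untreated outcome of the treated group after the intervention is never observed. That is why analysts look at pre-treatment periods for indirect evidence.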

Modern data scientists often extend DiD with robustness checks: event studies, placebo tests, or machine-learning-enhanced matching. In the hands of an adept analyst, DiD becomes both science and art — balancing logic with sensitivity to the context of data.
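
A placebo test, one of the checks mentioned above, can be sketched as follows. The yearly values, the 2018 treatment start, and the fake 2016 cutoff are all invented for illustration, and `did` is a hypothetical helper. The idea is to rerun the estimator on pre-treatment years only, pretending treatment began earlier. An estimate far from zero would cast doubt on parallel trends.

```python
from statistics import mean

# Hypothetical yearly mean outcomes; the real treatment starts in 2018.
treated = {2014: 40.0, 2015: 42.0, 2016: 44.0, 2017: 46.0, 2018: 53.0, 2019: 55.0}
control = {2014: 30.0, 2015: 32.0, 2016: 34.0, 2017: 36.0, 2018: 38.0, 2019: 40.0}


def did(treated, control, years, cutoff):
    """DiD of period means, splitting `years` into before/after `cutoff`."""
    pre = [y for y in years if y < cutoff]
    post = [y for y in years if y >= cutoff]

    def change(series):
        return mean(series[y] for y in post) - mean(series[y] for y in pre)

    return change(treated) - change(control)


all_years = sorted(treated)

# Actual estimate: uses the true treatment date.
actual = did(treated, control, all_years, cutoff=2018)

# Placebo estimate: pre-treatment years only, with a fake treatment date.
pre_years = [y for y in all_years if y < 2018]
placebo = did(treated, control, pre_years, cutoff=2016)

print(f"Actual DiD:  {actual:+.1f}")   # +5.0
print(f"Placebo DiD: {placebo:+.1f}")  # +0.0
```

In this constructed example, the placebo estimate is exactly zero because both series rise by the same amount each year before 2018. With real data, the placebo estimate would be compared against its sampling uncertainty rather than against zero exactly.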

As students progress through a data science course in Pune, they learn that causal inference isn’t about finding perfect data — it’s about crafting defensible stories supported by evidence, much like a detective piecing together clues from two crime scenes unfolding over time.

Conclusion: Measuring Change When Change Itself Is Messy

Difference-in-Differences stands out as a beacon of clarity amid the fog of confounding variables. It doesn’t demand perfect experiments or lab-like precision, just outcomes observed for both groups before and after the intervention, a credible comparison group, and thoughtful checks of the parallel trends assumption. Whether it’s measuring the impact of policy reforms, educational technology, or healthcare innovation, DiD reminds us that change can be measured if we know how to listen to time’s rhythm.

For aspiring analysts exploring a data scientist course, mastering DiD means more than learning a formula. It’s about learning to tell the story of change itself — how one decision, policy, or innovation ripples through time, leaving a measurable signature for those wise enough to find it.

Business Name: ExcelR – Data Science, Data Analytics Course Training in Pune

Address: 101 A, 1st Floor, Siddh Icon, Baner Rd, opposite Lane To Royal Enfield Showroom, beside Asian Box Restaurant, Baner, Pune, Maharashtra 411045

Phone Number: 098809 13504

Email Id: enquiry@excelr.com